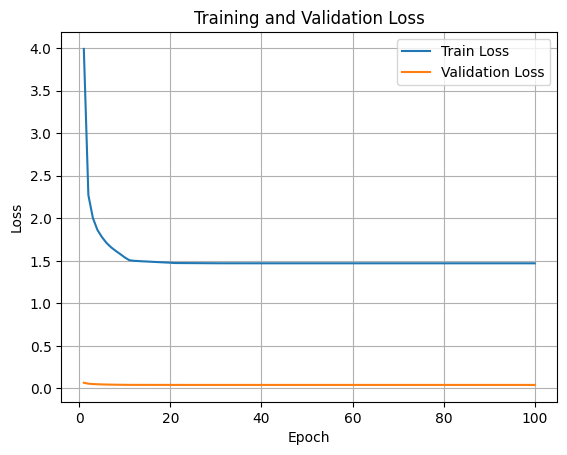

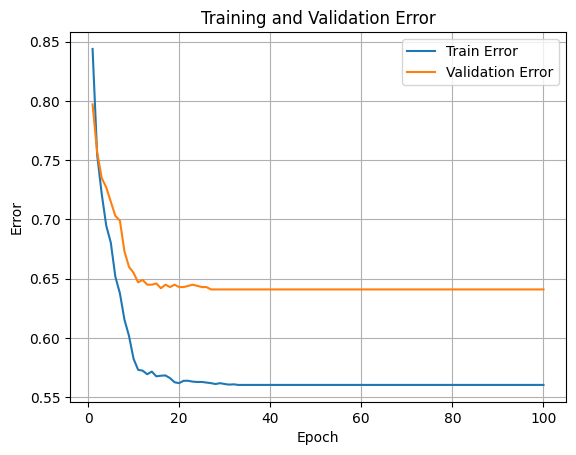

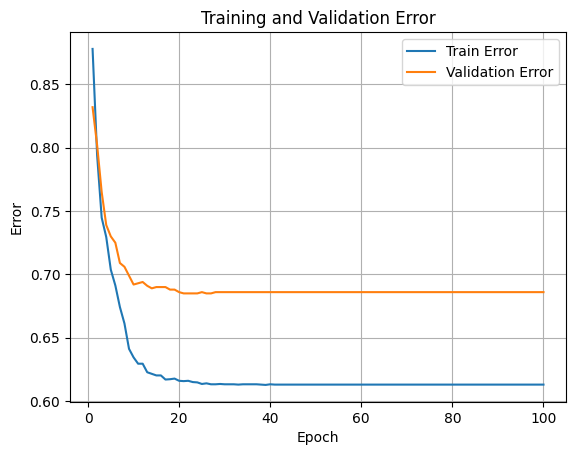

Epoch 1/100 - Train Loss: 3.9910, Train Error: 0.8438, Val Loss: 0.0675, Val Error: 0.7970

Epoch 2/100 - Train Loss: 2.2723, Train Error: 0.7548, Val Loss: 0.0563, Val Error: 0.7570

Epoch 3/100 - Train Loss: 2.0017, Train Error: 0.7218, Val Loss: 0.0522, Val Error: 0.7350

Epoch 4/100 - Train Loss: 1.8622, Train Error: 0.6947, Val Loss: 0.0499, Val Error: 0.7270

Epoch 5/100 - Train Loss: 1.7781, Train Error: 0.6805, Val Loss: 0.0478, Val Error: 0.7150

Epoch 6/100 - Train Loss: 1.7095, Train Error: 0.6518, Val Loss: 0.0463, Val Error: 0.7030

Epoch 7/100 - Train Loss: 1.6586, Train Error: 0.6378, Val Loss: 0.0454, Val Error: 0.6990

Epoch 8/100 - Train Loss: 1.6174, Train Error: 0.6155, Val Loss: 0.0444, Val Error: 0.6730

Epoch 9/100 - Train Loss: 1.5796, Train Error: 0.6018, Val Loss: 0.0435, Val Error: 0.6600

Epoch 10/100 - Train Loss: 1.5401, Train Error: 0.5825, Val Loss: 0.0428, Val Error: 0.6550

Epoch 11/100 - Train Loss: 1.5076, Train Error: 0.5733, Val Loss: 0.0425, Val Error: 0.6470

Epoch 12/100 - Train Loss: 1.5010, Train Error: 0.5725, Val Loss: 0.0424, Val Error: 0.6490

Epoch 13/100 - Train Loss: 1.4976, Train Error: 0.5695, Val Loss: 0.0424, Val Error: 0.6450

Epoch 14/100 - Train Loss: 1.4944, Train Error: 0.5717, Val Loss: 0.0423, Val Error: 0.6450

Epoch 15/100 - Train Loss: 1.4920, Train Error: 0.5677, Val Loss: 0.0422, Val Error: 0.6460

Epoch 16/100 - Train Loss: 1.4887, Train Error: 0.5682, Val Loss: 0.0422, Val Error: 0.6420

Epoch 17/100 - Train Loss: 1.4862, Train Error: 0.5685, Val Loss: 0.0421, Val Error: 0.6450

Epoch 18/100 - Train Loss: 1.4834, Train Error: 0.5662, Val Loss: 0.0420, Val Error: 0.6430

Epoch 19/100 - Train Loss: 1.4808, Train Error: 0.5627, Val Loss: 0.0420, Val Error: 0.6450

Epoch 20/100 - Train Loss: 1.4782, Train Error: 0.5620, Val Loss: 0.0419, Val Error: 0.6430

Epoch 21/100 - Train Loss: 1.4745, Train Error: 0.5640, Val Loss: 0.0419, Val Error: 0.6430

Epoch 22/100 - Train Loss: 1.4742, Train Error: 0.5640, Val Loss: 0.0419, Val Error: 0.6440

Epoch 23/100 - Train Loss: 1.4739, Train Error: 0.5633, Val Loss: 0.0419, Val Error: 0.6450

Epoch 24/100 - Train Loss: 1.4736, Train Error: 0.5630, Val Loss: 0.0419, Val Error: 0.6440

Epoch 25/100 - Train Loss: 1.4733, Train Error: 0.5630, Val Loss: 0.0419, Val Error: 0.6430

Epoch 26/100 - Train Loss: 1.4731, Train Error: 0.5625, Val Loss: 0.0419, Val Error: 0.6430

Epoch 27/100 - Train Loss: 1.4728, Train Error: 0.5620, Val Loss: 0.0419, Val Error: 0.6410

Epoch 28/100 - Train Loss: 1.4725, Train Error: 0.5613, Val Loss: 0.0419, Val Error: 0.6410

Epoch 29/100 - Train Loss: 1.4723, Train Error: 0.5620, Val Loss: 0.0419, Val Error: 0.6410

Epoch 30/100 - Train Loss: 1.4720, Train Error: 0.5613, Val Loss: 0.0419, Val Error: 0.6410

Epoch 31/100 - Train Loss: 1.4716, Train Error: 0.5608, Val Loss: 0.0419, Val Error: 0.6410

Epoch 32/100 - Train Loss: 1.4716, Train Error: 0.5610, Val Loss: 0.0419, Val Error: 0.6410

Epoch 33/100 - Train Loss: 1.4715, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 34/100 - Train Loss: 1.4715, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 35/100 - Train Loss: 1.4715, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 36/100 - Train Loss: 1.4715, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 37/100 - Train Loss: 1.4714, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 38/100 - Train Loss: 1.4714, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 39/100 - Train Loss: 1.4714, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 40/100 - Train Loss: 1.4714, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 41/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 42/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 43/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 44/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 45/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 46/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 47/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 48/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 49/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 50/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 51/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 52/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 53/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 54/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 55/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 56/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 57/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 58/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 59/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 60/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 61/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 62/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 63/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 64/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 65/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 66/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 67/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 68/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 69/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 70/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 71/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 72/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 73/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 74/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 75/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 76/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 77/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 78/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 79/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 80/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 81/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 82/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 83/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 84/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 85/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 86/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 87/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 88/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 89/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 90/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 91/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 92/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 93/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 94/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 95/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 96/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 97/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 98/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 99/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410

Epoch 100/100 - Train Loss: 1.4713, Train Error: 0.5605, Val Loss: 0.0419, Val Error: 0.6410
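A log in this format can be read back into structured records to check where training stops improving. The following is a minimal sketch, not the code that produced the log: the function names `parse_log` and `plateau_start`, and the `tol` and `patience` parameters, are hypothetical and chosen only for illustration.

```python
import re

# Matches lines of the form printed in the log above.
LOG_LINE = re.compile(
    r"Epoch (\d+)/(\d+) - Train Loss: ([\d.]+), Train Error: ([\d.]+), "
    r"Val Loss: ([\d.]+), Val Error: ([\d.]+)"
)


def parse_log(text):
    """Return one dict per epoch line found in `text`."""
    records = []
    for m in LOG_LINE.finditer(text):
        records.append({
            "epoch": int(m.group(1)),
            "train_loss": float(m.group(3)),
            "train_error": float(m.group(4)),
            "val_loss": float(m.group(5)),
            "val_error": float(m.group(6)),
        })
    return records


def plateau_start(records, key="train_loss", tol=5e-4, patience=5):
    """Return the first epoch from which `key` varies by at most `tol`
    over the next `patience` epochs, or None if no such epoch exists.

    `tol` and `patience` are illustrative defaults (assumptions).
    """
    values = [r[key] for r in records]
    for i in range(len(values) - patience):
        window = values[i:i + patience + 1]
        if max(window) - min(window) <= tol:
            return records[i]["epoch"]
    return None
```

Calling `plateau_start(parse_log(log_text))` on the full log text would report the epoch at which the training loss flattens under the chosen tolerance; the same call with `key="val_error"` checks the validation error instead.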
