Residual Networks (ResNet)

Residual Networks (ResNets) are a type of deep learning architecture designed to make very deep networks trainable. This explainer introduces the architecture, simplifies its main concepts, and ends with a simple experiment showing how residual connections improve training accuracy and results.

We will discuss the following:

Introduction: What Are Residual Networks (ResNet)?

Deeper networks generally reach higher training accuracy and better performance. However, experiments showed that adding more and more layers does not keep improving results: beyond a certain depth, the network becomes difficult to train. Residual connections were introduced to solve this issue. They let the network “skip” one or more layers by adding shortcut connections that bypass them.

These shortcut (or skip) connections add the input of a layer directly to the output of a deeper layer, which lets gradients flow easily through many layers. In a deep network without these connections, the gradients are repeatedly multiplied by weights during backpropagation, which leads to the vanishing or exploding gradient problem. Residual connections give the gradient a direct path that does not pass through the layer.
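To make the gradient argument concrete, here is a toy sketch in plain Python. It assumes every layer is a single scalar weight `w` (a strong simplification, and the function names are hypothetical), so that the gradient of the output with respect to the input is just a product of per-layer factors.

```python
def plain_gradient(w: float, depth: int) -> float:
    """d(output)/d(input) for a plain stack h_k = w * h_{k-1}."""
    grad = 1.0
    for _ in range(depth):
        grad *= w  # every layer multiplies the gradient by its weight
    return grad


def residual_gradient(w: float, depth: int) -> float:
    """d(output)/d(input) for a residual stack h_k = h_{k-1} + w * h_{k-1}."""
    grad = 1.0
    for _ in range(depth):
        grad *= 1.0 + w  # the 1 is the skip path, w is the path through the layer
    return grad


w, depth = 0.1, 20
print("plain:   ", plain_gradient(w, depth))     # shrinks toward zero
print("residual:", residual_gradient(w, depth))  # stays above 1 because of the skip path
```

With a small weight, the plain product collapses toward zero while the residual product does not, because each factor contains the constant 1 contributed by the skip connection. The toy does not show that residual gradients are always well behaved: with larger weights the residual product can still grow.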

The network architecture

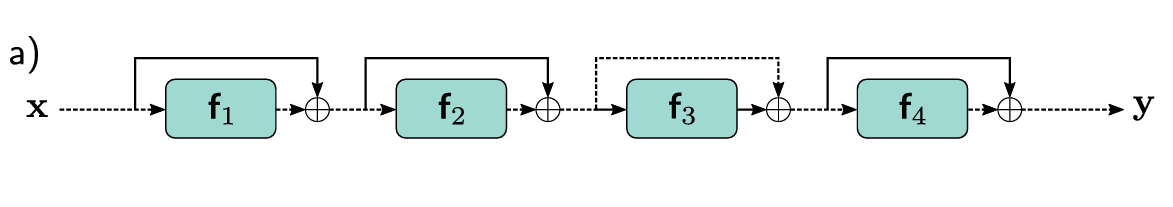

Below is a simple diagram from the book Understanding Deep Learning by Simon J.D. Prince, showing how a basic ResNet is designed:

There are many other types of residual connections. The skip connection can span more than one layer, or it can include additional operations such as a 1x1 convolution to match channel dimensions or batch normalization.
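As an illustration of a skip connection that spans two layers and uses a 1x1 convolution to match channel dimensions, here is a minimal PyTorch sketch. The class name `ProjectionResidualBlock`, the 3x3 kernels, and the channel counts are assumptions chosen for the example, not details taken from the diagram.

```python
import torch
from torch import nn


class ProjectionResidualBlock(nn.Module):
    """Residual block whose skip path uses a 1x1 convolution when channels differ."""

    def __init__(self, in_channels: int, out_channels: int):
        super().__init__()
        # Main path: two 3x3 convolutions, each followed by batch normalization.
        self.body = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(),
            nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(out_channels),
        )
        # Skip path: identity if the shapes already match, otherwise a 1x1
        # convolution (plus batch norm) that changes the channel count.
        if in_channels == out_channels:
            self.shortcut = nn.Identity()
        else:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, bias=False),
                nn.BatchNorm2d(out_channels),
            )
        self.relu = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # The skip spans both convolutions of the main path.
        return self.relu(self.body(x) + self.shortcut(x))


block = ProjectionResidualBlock(in_channels=16, out_channels=32)
x = torch.randn(8, 16, 28, 28)
out = block(x)
print(out.shape)  # torch.Size([8, 32, 28, 28])
```

Without the 1x1 convolution, the addition would fail here, because a 16-channel input cannot be added to a 32-channel output.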

ResNet Math

Going back to the ResNet design above, the input \(x\) is fed to the first block \(f_1\) and then added to that block's output. The result \(h_1\) becomes the input to the next block, and the same pattern repeats:

\(h_1 = x + f_1(x)\)

\(h_2 = h_1 + f_2(h_1)\)

\(h_3 = h_2 + f_3(h_2)\)

\(h_4 = h_3 + f_4(h_3)\)
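As a short worked step that follows from these definitions, substituting the first equation into the second unrolls the recursion:

\(h_2 = x + f_1(x) + f_2\big(x + f_1(x)\big)\)

The input \(x\) appears in the output unchanged, next to the contributions of each block. Applying the chain rule to the same two equations gives the gradient of \(h_2\) with respect to \(x\), where \(I\) denotes the identity matrix (a symbol introduced here for the derivation):

\(\frac{\partial h_2}{\partial x} = \left(I + \frac{\partial f_2}{\partial h_1}\right)\left(I + \frac{\partial f_1}{\partial x}\right)\)

\(\frac{\partial h_2}{\partial x} = I + \frac{\partial f_1}{\partial x} + \frac{\partial f_2}{\partial h_1} + \frac{\partial f_2}{\partial h_1}\frac{\partial f_1}{\partial x}\)

The leading \(I\) is the direct path: even if the block derivatives are small, this term does not shrink as more blocks are stacked.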

Code & Example

Here is a simple code experiment for a ResNet built using PyTorch and the MNIST-1D dataset.
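The notebook's code is not reproduced in this section. As a rough sketch of what a residual model for this kind of experiment could look like, the snippet below builds a small fully connected network with four residual blocks and runs a few optimizer steps. The class name `ResidualMLP`, the layer widths, the optimizer settings, and the 40-feature, 10-class shapes are assumptions for illustration. The batch is random stand-in data rather than MNIST-1D, so the printed losses say nothing about real accuracy.

```python
import torch
from torch import nn


class ResidualMLP(nn.Module):
    """Sketch of a residual classifier: input layer, residual blocks, output layer."""

    def __init__(self, in_dim: int = 40, width: int = 64,
                 num_classes: int = 10, num_blocks: int = 4):
        super().__init__()
        self.input_layer = nn.Linear(in_dim, width)
        # Each block f_k maps width -> width so that h + f_k(h) is well defined.
        self.blocks = nn.ModuleList([
            nn.Sequential(nn.ReLU(), nn.Linear(width, width))
            for _ in range(num_blocks)
        ])
        self.output_layer = nn.Linear(width, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        h = self.input_layer(x)
        for f in self.blocks:
            h = h + f(h)  # h_k = h_{k-1} + f_k(h_{k-1})
        return self.output_layer(h)


torch.manual_seed(0)
model = ResidualMLP()
optimizer = torch.optim.SGD(model.parameters(), lr=0.05, momentum=0.9)
loss_fn = nn.CrossEntropyLoss()

# Random stand-in batch (assumption): swap in real MNIST-1D inputs and labels.
x = torch.randn(32, 40)
y = torch.randint(0, 10, (32,))

for step in range(5):
    optimizer.zero_grad()
    loss = loss_fn(model(x), y)
    loss.backward()
    optimizer.step()
    print(step, loss.item())
```

To compare against a plain network of the same depth, one option is to replace `h = h + f(h)` with `h = f(h)` and train both variants on the same data.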

See the full notebook here:
